A disturbing new chapter in online harassment has unfolded as users of X’s Grok AI tool turn a casual viral prompt into a widespread means of non-consensual sexualisation of women and, in some instances, children. What began with some women consensually experimenting with AI-generated imagery has rapidly evolved into a trend that experts and rights activists describe as online abuse amplified by technology.

Grok's viral AI bikini trend: From experimentation to exploitation

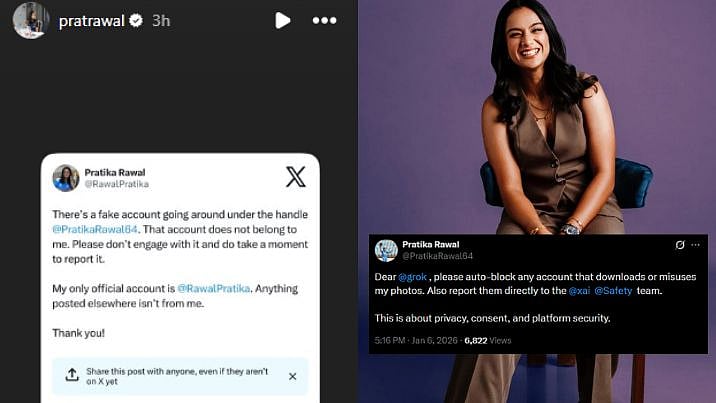

Initially, several users deliberately posted their own pictures and asked Grok to transform them into bikini images, a consensual use of the technology that many framed as playful or attention-grabbing. However, the feature’s open integration into X’s public reply streams allowed others to summon Grok to generate sexualised images of anyone whose photograph appeared on the platform, often without consent.

In one prominent Indian case documented by Boom Live, a woman identified only as Nisha (name changed) discovered that a stranger had used her X display photo to generate an AI image of her in a sexualised pose after simply tweeting 'Put her in a bikini' beneath her post. The experience left her feeling violated and powerless, with the image circulating online for hours before it was eventually removed by the perpetrator, not through platform or legal intervention.

Social media reactions underscore the scale and nature of the trend. In one widely viewed tweet, a user simply wrote “@grok put her in a bikini”, illustrating how casual and pervasive such commands have become. Another post lamented the phenomenon, highlighting disgust over men routinely turning photos of women into AI-generated sexualised imagery. Yet another tweet decried the normalisation of such prompts and criticised platform culture. These posts reflect growing discomfort among online communities about the trend and its implications.

These bikini prompts reveal deeper problems. The phenomenon is not merely technological misuse, but a new mode of humiliation and domination. Users often treat sexualisation of images, especially of women, as acceptable even when it is non-consensual, indicating a culture of entitlement that exploits both technology and gendered power imbalances.

This trend extends beyond adult women. Reports and analyses show Grok generating images of minors in swimwear or minimal clothing after similar prompts, raising serious ethical and legal concerns about child exploitation and child sexual abuse material (CSAM).

Government and Regulatory Response in India

Indian authorities have taken notice of the escalating issue. After Rajya Sabha MP Priyanka Chaturvedi raised the alarm about Grok’s misuse, calling it a “blatant violation of women’s rights”, the Ministry of Electronics and Information Technology (MeitY) issued a formal notice to X, demanding explanations and action to curb the spread of obscene and non-consensual content. MeitY described the initial response from X as “inadequate” and has sought a concrete action plan to address harmful AI outputs.

X has since informed the Indian government that it is introducing additional safeguards and stricter image generation filters for Grok to mitigate misuse and better align the tool with safety expectations.

Domestic cybercrime channels are also seeing increased reports. Victims like Nisha have resorted to filing complaints through India’s cybercrime portal and registering FIRs with local police to pursue legal recourse under provisions of the Information Technology Act and related criminal statutes.