In a move that marks a first for major consumer AI chatbots, Anthropic has begun requiring some users of its Claude AI to verify their identity with a government-issued photo ID and a live selfie, adopting a know-your-customer (KYC) style process similar to those used by banks and financial services.

The update, quietly added to Claude’s help center around mid-April 2026, aims to help the company “prevent abuse, enforce our usage policies, and comply with legal obligations.” Anthropic describes the verification as applying to “a few use cases,” potentially triggered when users access certain advanced capabilities, during routine platform integrity checks, or as part of broader safety and compliance measures. It is not yet a blanket requirement for all users or for basic access.

How the Verification Works

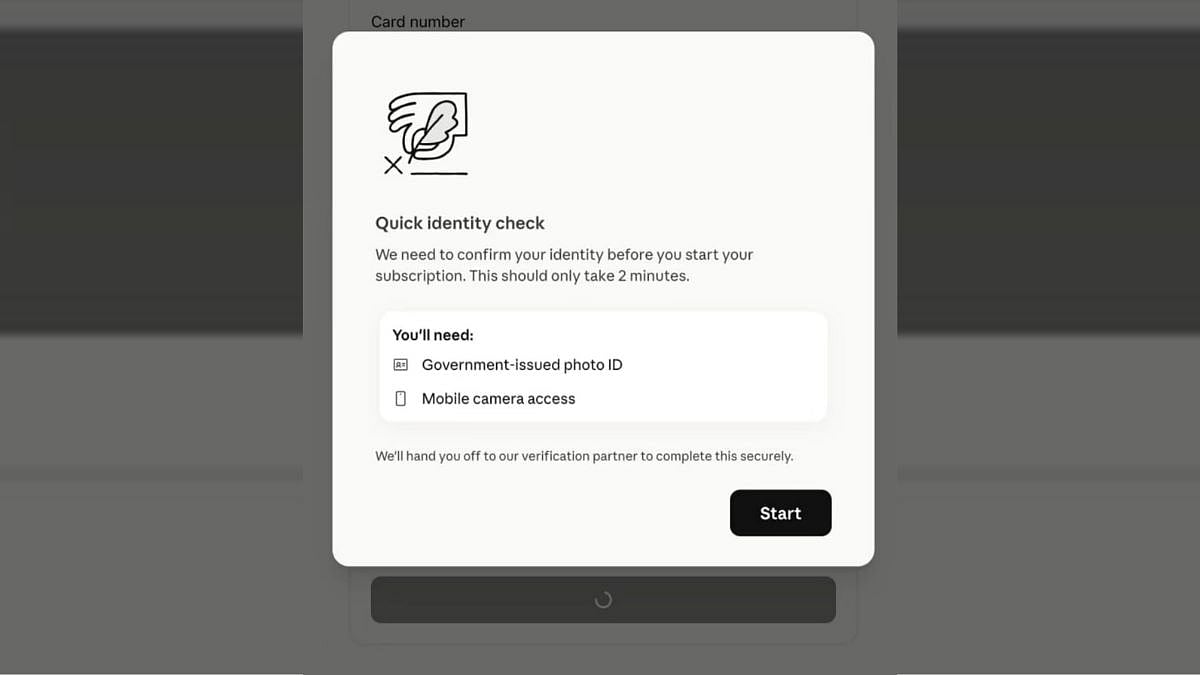

Users prompted for verification must provide:

- An original government-issued photo ID (such as a passport, driver’s license, state/provincial ID, or national identity card).

- A live selfie taken via phone camera or webcam during the process.

The entire check typically takes under five minutes and is handled by Persona Identities, a third-party San Francisco-based verification platform.

Anthropic emphasizes that the ID images and selfie data are collected and stored by Persona, not on its own servers. The company states that it does not use this identity data to train its models and that the information is used solely to confirm who is using the service. Anthropic remains the data controller for the verification process.

Users can retry failed attempts, but accounts that cannot complete verification may face restrictions or bans, particularly if Claude is unavailable in the user’s region or if the user is under 18.

Anthropic frames the change as part of responsible stewardship of its powerful AI tools, especially as Claude grows more capable. The company has been vocal about AI safety and recently distanced itself from certain government contracts, including walking away from a U.S. Department of Defense deal over concerns about potential misuse for mass surveillance.

Verification helps curb issues like fraudulent accounts, repeat policy violators, underage access, and abuse of advanced features.

The announcement has sparked significant backlash online. Many users view the requirement as a privacy overreach, with some calling it a “dealbreaker” and announcing they are canceling subscriptions or switching to competitors.

The new requirement could be a boon for rivals like OpenAI’s ChatGPT and Google’s Gemini. Neither currently imposes a similar government-ID requirement for standard use, leading to comments that Anthropic may have “handed their competitors a gift.”